How AI Voice Agents Are Transforming Customer Engagement at Scale

AI voice agents are production-grade systems handling inbound calls, outbound campaigns, and patient communications at scale. Businesses that deploy them with sound dialogue design, tested fallback logic, and a structured post-launch optimization cycle see measurable reductions in handling time, no-show rates, and cost per interaction, while freeing human teams for higher-value work.

The global AI voice agents market reached $2.54 billion last year. According to Grand View Research, it is projected to reach $35.24 billion by 2033, growing at a CAGR of 39%.

These are not forecast numbers from a market still proving itself. They reflect capital flowing into deployments that are already producing results. For technology and operations leaders, the implication is clear. The businesses that figured this out 18 months ago are not waiting for you to catch up.

Key Takeaways

- Most businesses underestimate how much unresolved calls cost them.

- The integration layer matters more than the voice quality does.

- Healthcare voice deployments pay back faster than almost any vertical.

- Restaurants need acoustic calibration, not just a plugged-in agent.

- Generic platforms fail where custom dialogue design succeeds consistently.

- Post-launch optimization is where most of the real value sits.

What Is an AI Voice Agent (And What It Is Not)

An AI voice agent is a software system that conducts spoken conversations with humans in real time, without a live agent on the other end. It listens, processes intent, and responds within milliseconds. Not "as fast as a human." Faster, and without a hold queue.

Four core layers make this possible.

1. ASR (Automatic Speech Recognition): It converts audio to text in real time. Modern engines from Deepgram, OpenAI Whisper, and Google handle accents and ambient noise at an accuracy level that legacy telephony systems never reached.

2. NLU (Natural Language Understanding): It extracts intent and entities from that text. A caller saying, "Can I push my 10 AM to Thursday?" is understood as a reschedule request with specific time entities, mapped against the conversation context held in memory.

3. Dialogue Management: It decides what happens next based on what the agent knows and what it still needs. Does it have enough to act? Does it need to ask something? Should the call escalate?

4. Back-end Integration: This layer is where the call actually resolves. The agent connects to your CRM, EHR, booking system, or order platform in real time and does something with the conversation. Without this layer, the agent is a conversational interface that leads nowhere.
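To make the NLU and dialogue management layers concrete, here is a minimal sketch in Python. It assumes ASR has already produced text, and it uses keyword rules in place of a trained intent model. The names `understand`, `next_action`, and the slot names `current_time` and `new_day` are hypothetical, chosen for illustration only.

```python
import re
from dataclasses import dataclass, field

DAYS = ("monday", "tuesday", "wednesday", "thursday",
        "friday", "saturday", "sunday")


@dataclass
class Understanding:
    intent: str
    entities: dict = field(default_factory=dict)


def understand(text: str) -> Understanding:
    """Toy NLU: keyword rules standing in for a trained intent model."""
    lowered = text.lower()
    entities = {}

    # Pull out a clock time such as "10 am" or "2:30 pm".
    time_match = re.search(r"\b(\d{1,2})(?::(\d{2}))?\s*(am|pm)\b", lowered)
    if time_match:
        entities["current_time"] = time_match.group(0).upper()

    # Pull out the first weekday mentioned.
    for day in DAYS:
        if day in lowered:
            entities["new_day"] = day.capitalize()
            break

    if any(word in lowered for word in ("push", "move", "reschedule")):
        return Understanding("reschedule", entities)
    return Understanding("unknown", entities)


def next_action(result: Understanding) -> str:
    """Toy dialogue manager: act, ask for a missing slot, or escalate."""
    if result.intent == "unknown":
        return "escalate"
    missing = [slot for slot in ("current_time", "new_day")
               if slot not in result.entities]
    if missing:
        return f"ask:{missing[0]}"
    return "act:reschedule"


result = understand("Can I push my 10 AM to Thursday")
print(result.intent, result.entities, next_action(result))
```

In this sketch the example utterance resolves to a reschedule intent with both slots filled, so the dialogue manager chooses to act. In a real deployment, the `act:reschedule` step is where the back-end integration would call the booking system.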

Also Read: For a detailed technical breakdown of how these components connect, check out this guide on building an AI agent.

Which AI Voice Agent Is Right for Your Business?

The right answer depends on your call types, your existing systems, and what your escalation path actually requires. The table below maps the deployment approach to business type and use case:

| Business Type | What a Custom Build Covers | Primary Outcome |
| --- | --- | --- |
| Small businesses | Dialogue flows built on real call data, CRM connector, and tested escalation path | Operational in weeks, performing from day one |
| Mid-market | Multi-flow architecture, ticketing and scheduling integration, outbound campaigns | Inbound deflection and outbound automation in one deployment |
| Enterprise | Multi-system integration, compliance architecture, full analytics, optimization cycles | Contact center cost reduction at measurable scale |
| Healthcare providers | HIPAA-compliant build, EHR sync, PHI handling, 24/7 availability | Scheduling automation, no-show reduction, post-discharge outreach |
| Restaurant chains | Acoustic-calibrated ASR, POS integration, multi-location deployment | Consistent phone order handling across every location at peak hours |

The Build vs. Buy Decision for AI Voice Agents

Generic platforms get a basic agent live quickly. That is their advantage and the limit of it. Pre-built dialogue flows do not reflect how your customers phrase requests. Standard integrations do not account for how your CRM data is structured. And when edge cases appear in production, a platform offers documentation.

A custom deployment addresses these gaps. Building custom means working with a partner who scopes dialogue logic to your actual call data, integrates with your specific systems, and stays accountable through the optimization cycle.

For businesses that cannot absorb a failed deployment, the cost of getting this wrong the first time is higher than the cost of building it correctly.

Now, let’s explore the two major sectors where AI voice agents are making the biggest impact.

AI Voice Agents in Healthcare: Where the Operational Case Is Strongest

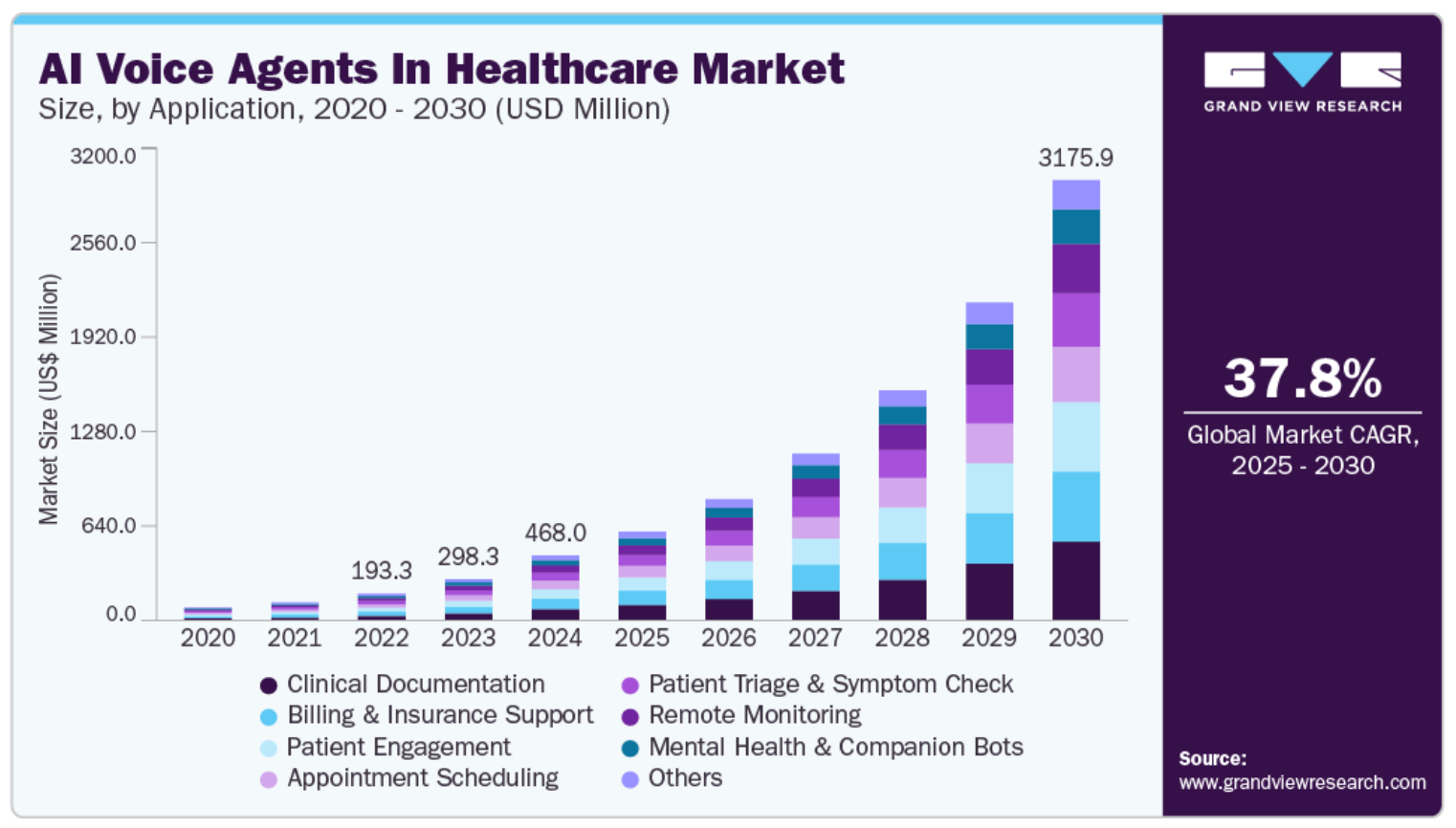

The global market for AI voice agents in healthcare is projected to reach $3.18 billion by 2030, per Grand View Research. That 37.79% CAGR reflects deployment velocity, not projections built on hypothetical adoption.

Healthcare businesses run on phone calls: appointment reminders, prescription follow-ups, discharge check-ins, and insurance intake. None of these require clinical judgment, but all require consistent availability across hours that staffed phone lines do not cover without significant cost. That is precisely why this vertical has moved from pilot to permanent deployment faster than almost anywhere else.

A clinic fielding 300 scheduling calls a day cannot cost-effectively staff a phone line around the clock. But a trained voice agent can do it at a fraction of the per-interaction cost.

The use cases below show where healthcare organizations are seeing the clearest returns:

1. Appointment Scheduling and Rescheduling: This process is the highest-volume entry point for most healthcare deployments. Voice agents handle inbound scheduling end-to-end, including mid-call rescheduling and cancellations. The bigger gain often comes from outbound reminder calls with rescheduling capability built directly into the conversation. Early adopters have reported a no-show rate reduction of 30-35% through this approach.

2. Post-Discharge Follow-Up: Patients contacted within 48-72 hours of discharge are significantly less likely to be readmitted. For a hospital managing 10,000 annual discharges, a 10% improvement in 30-day readmission rates translates to millions in avoided costs. Most healthcare systems still handle this manually when they do it at all.

3. Prescription and Preventive Care Reminders: High-volume, low-complexity outbound calls that consume staff time without requiring clinical input. Automating them removes a predictable daily load from phone queues and returns that time to clinical work.

4. Pre-Appointment Insurance Verification: Tedious, necessary, and almost never requiring a human. A voice agent runs this intake at any hour and writes data directly to the patient record. Discrepancies get flagged before the appointment day, not discovered at check-in when the patient is standing in front of a receptionist.

5. HIPAA-Compliant Agent: Every deployment in this vertical requires a signed BAA with the platform, PHI handling controls, encrypted transmission, and audit logging. This is not a box to check after the feature evaluation; it is the starting point.
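The post-discharge arithmetic above can be sketched as a short calculation. Only the 10,000 annual discharges and the 10% improvement come from this article. The 15% baseline readmission rate and the $15,000 cost per readmission are hypothetical placeholders, and the 10% is read here as a relative improvement, which is also an assumption. Substitute your own figures.

```python
def avoided_readmission_cost(annual_discharges: int,
                             baseline_readmission_rate: float,
                             relative_improvement: float,
                             cost_per_readmission: float):
    """Return (avoided readmissions, avoided cost) for one year."""
    baseline_readmissions = annual_discharges * baseline_readmission_rate
    avoided = baseline_readmissions * relative_improvement
    return avoided, avoided * cost_per_readmission


# 10,000 discharges and a 10% improvement are from the text above.
# The 15% baseline rate and $15,000 unit cost are ASSUMED placeholders.
avoided, savings = avoided_readmission_cost(
    annual_discharges=10_000,
    baseline_readmission_rate=0.15,
    relative_improvement=0.10,
    cost_per_readmission=15_000,
)
print(f"{avoided:.0f} avoided readmissions, ${savings:,.0f} in avoided cost")
```

Under those placeholder inputs the result is roughly 150 avoided readmissions and about $2.25 million in avoided cost, which is the scale the "millions" claim implies. The figure moves linearly with each input, so the baseline rate and unit cost deserve the most scrutiny.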

AI Voice Agents for Restaurants: A More Complex Deployment Than It Appears

The case for automating phone ordering in restaurants is straightforward. Every location handles the same four or five call types hundreds of times a week: orders, reservations, wait times, and menu questions. The volume is predictable and the queries are repetitive, so voice agents should handle this well.

They do, but only in well-designed deployments. Restaurants are acoustically hostile environments, and the teams that skip acoustic calibration find out what that means after go-live, not before.

1. Acoustic Model Calibration for the Real Environment: This is the step that gets omitted most often and causes the most post-launch problems. An agent trained on clean studio recordings underperforms in an open kitchen during dinner service. Platforms that support model fine-tuning on recorded call data from the actual location perform consistently better than those that do not. Before committing to any platform for a restaurant deployment, confirm whether this capability exists.

2. POS Integration That Actually Closes the Call: An agent that confirms availability, reads back order totals with modifiers applied, and confirms pickup time within the same conversation is useful. One that captures an order and says someone will call back to confirm is not a deployed solution. It is a call routing system with more steps. The POS integration determines whether the deployment has operational value.

3. Hard Escalation Points for Complex Orders: Attempting to process a ten-item customized order with multiple substitutions and allergy notes autonomously will produce errors. The right design handles standard orders end-to-end and routes complex ones to a staff member with the partial order pre-populated in the POS. That handoff is what keeps accuracy intact.

4. Non-Standard Item Recognition: Real callers do not speak in printed menu language. "The pasta thing from last month" and "whatever has the spicy sauce" are actual inputs. NLU models fine-tuned on restaurant-specific utterance data handle these. General-purpose models do not, and the gap shows up in order accuracy almost immediately after launch.

5. Multi-Location Scalability: For chains, this is the core argument. One trained deployment answers calls across every location simultaneously at a consistent quality level that in-store staff cannot maintain during peak hours when phones are competing with table service.
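The hard escalation point described for complex orders can be sketched as a simple routing rule. This is an illustration under assumptions, not a POS vendor's API: the `OrderItem` structure, the `route_order` function, and the thresholds `MAX_ITEMS` and `MAX_SUBSTITUTIONS` are all hypothetical and would be tuned per restaurant.

```python
from dataclasses import dataclass, field


@dataclass
class OrderItem:
    name: str
    substitutions: list = field(default_factory=list)
    allergy_note: str = ""


# Hypothetical thresholds; tune them against real order data.
MAX_ITEMS = 6
MAX_SUBSTITUTIONS = 2


def route_order(items: list) -> dict:
    """Decide whether the agent completes the order or hands it to staff."""
    reasons = []
    if len(items) > MAX_ITEMS:
        reasons.append("too_many_items")
    if sum(len(item.substitutions) for item in items) > MAX_SUBSTITUTIONS:
        reasons.append("too_many_substitutions")
    if any(item.allergy_note for item in items):
        reasons.append("allergy_note")

    return {
        "route": "staff" if reasons else "agent",
        "reasons": reasons,
        # Item names captured so far, so staff do not start from scratch.
        "prefilled_items": [item.name for item in items],
    }


order = [OrderItem("pad thai", substitutions=["tofu"],
                   allergy_note="peanut allergy")]
print(route_order(order))
```

The design choice here is that any allergy note forces a handoff regardless of order size, and the partial order travels with the handoff so the staff member picks up where the agent stopped.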

AI Voice Agent Services for Businesses: What a Custom Deployment Actually Delivers

Building a custom AI voice agent requires the right architecture, integration depth, and dialogue engineering to perform in production. Most businesses do not have that capability in-house, which is where a specialized development partner makes the difference.

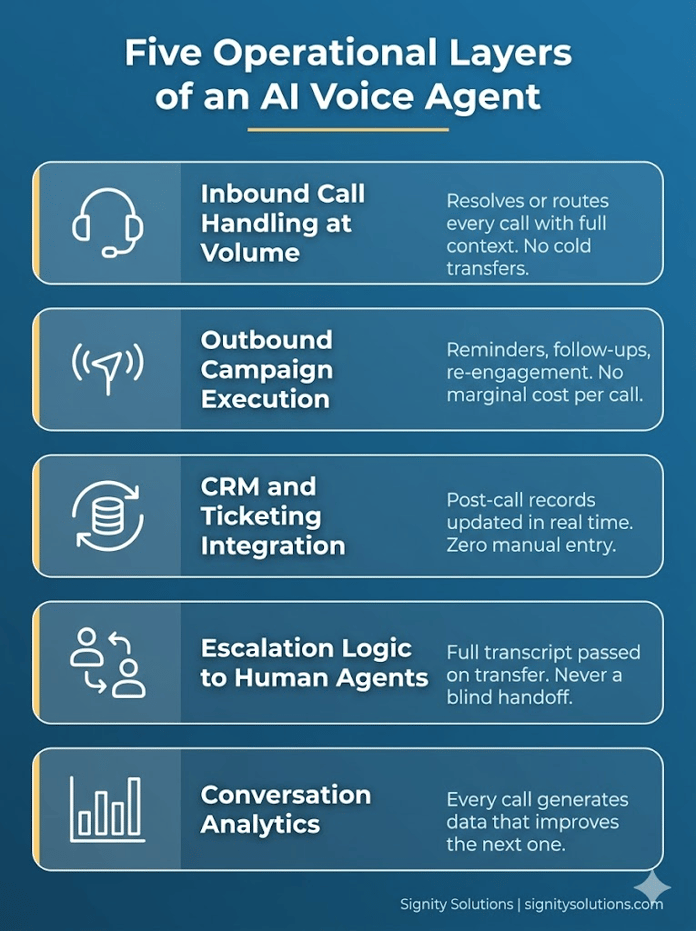

A production-grade deployment covers five operational layers, and each one matters:

1. Inbound Call Handling at Volume: The agent answers calls, authenticates the caller where required, identifies the query, and either resolves it autonomously or routes it with full context passed to the human agent. No hold queue and no cold transfer.

2. Outbound Campaign Execution: Automated outbound calls for appointment reminders, payment follow-ups, post-service check-ins, and re-engagement. These run at any volume with no additional per-call labor cost.

3. CRM and Ticketing Integration: Post-call summaries, record updates, and escalation notes written back to platforms like Salesforce, HubSpot, or Zendesk in real time. No manual data entry after the call ends.

4. Escalation Logic to Human Agents: A well-built agent knows its own limits. When a query exceeds its resolution scope, it hands off with a full transcript and context summary, not a blind transfer.

5. Conversation Analytics: Every call, resolved or not, generates structured data. Intent distributions, resolution rates, escalation triggers, and sentiment signals feed into dashboards that drive ongoing performance improvement.
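As an illustration of the conversation analytics layer, the sketch below aggregates per-call records into an intent distribution, a resolution rate, and a count of escalation triggers. The record fields (`intent`, `resolved`, `escalation_trigger`) and the sample data are hypothetical; a real deployment would read them from call logs. Sentiment signals are omitted to keep the example short.

```python
from collections import Counter

# Hypothetical call records; field names are assumptions for illustration.
calls = [
    {"intent": "reschedule", "resolved": True, "escalation_trigger": None},
    {"intent": "reschedule", "resolved": True, "escalation_trigger": None},
    {"intent": "billing", "resolved": False,
     "escalation_trigger": "out_of_scope"},
    {"intent": "cancel", "resolved": False,
     "escalation_trigger": "caller_requested_human"},
]


def summarize(call_records: list) -> dict:
    """Aggregate call records into dashboard-style metrics."""
    total = len(call_records)
    resolved = sum(1 for call in call_records if call["resolved"])
    return {
        "intent_distribution": dict(
            Counter(call["intent"] for call in call_records)),
        "resolution_rate": resolved / total if total else 0.0,
        "escalation_triggers": dict(
            Counter(call["escalation_trigger"] for call in call_records
                    if call["escalation_trigger"])),
    }


print(summarize(calls))
```

Tracking escalation triggers separately from the resolution rate shows why calls are leaving the agent, which is the input the optimization cycle needs.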

Where AI Voice Agent Implementations Fail

These are not technology failures. Every one of them is a design failure, and every one is predictable.

Dialogue Flows Built on Assumptions, Not Data

Teams underestimate the range of ways real callers phrase identical requests. An agent trained on ten utterances per intent will break the moment a production caller goes off-script, which happens constantly. Utterance coverage must be built from real call recordings. Post-launch optimization must run on real transcript data. Internal testing alone does not catch the edge cases that matter.

No Defined Fallback for What the Agent Cannot Handle

An agent that hits an unresolvable query and loops the caller through the same prompt is worse than not having deployed at all. Fallback logic, whether a graceful acknowledgment, a warm transfer, or a callback option, must be designed and tested before go-live. Teams that add this after complaints arrive are fixing a problem that should never have reached a customer.
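One way to prevent the looping behavior described above is to cap re-prompts and then force a handoff. The sketch below assumes a hypothetical limit of two failed attempts and hypothetical action names. It illustrates the three fallback options named above and is not a specific platform's configuration.

```python
# Hypothetical cap on failed understanding attempts for a single query.
MAX_REPROMPTS = 2


def fallback_action(failed_attempts: int, human_available: bool) -> str:
    """Pick a fallback so the caller is never looped through one prompt."""
    if failed_attempts < MAX_REPROMPTS:
        # Acknowledge the miss and ask again in different words.
        return "acknowledge_and_rephrase"
    if human_available:
        # Hand off with transcript and context attached.
        return "warm_transfer"
    # Nobody is available, so capture a callback instead of looping.
    return "offer_callback"


for attempts in (1, 2):
    print(attempts, fallback_action(attempts, human_available=True))
```

Because the function is pure, each branch can be covered by a unit test before go-live, which is the point the paragraph above makes about testing fallback logic in advance.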

CRM Data Quality Treated as Someone Else's Problem

Voice agents that pull from a CRM to personalize responses fail when underlying records are incomplete, duplicated, or inconsistently formatted. Those issues do not stay in the database. They surface as incorrect agent responses during live calls. Data quality is an integration prerequisite. The audit should happen before deployment, not after the first batch of escalations.
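A pre-deployment audit for the three problems named above (incomplete, duplicated, and inconsistently formatted records) could start as simply as the sketch below. The field names, the choice of phone number as the duplicate key, and the E.164-style phone pattern are assumptions for illustration. Real CRM exports will need their own rules.

```python
import re
from collections import Counter

# Assumed conventions: these fields are required, and phone numbers
# should be stored in an E.164-style format such as +14155550101.
REQUIRED_FIELDS = ("name", "phone", "email")
PHONE_PATTERN = re.compile(r"^\+\d{8,15}$")


def audit_records(records: list) -> list:
    """Return (record index, issue) pairs found in exported CRM records."""
    issues = []
    phone_counts = Counter(
        record.get("phone") for record in records if record.get("phone"))

    for index, record in enumerate(records):
        missing = [name for name in REQUIRED_FIELDS if not record.get(name)]
        if missing:
            issues.append((index, "missing:" + ",".join(missing)))

        phone = record.get("phone")
        if phone and not PHONE_PATTERN.match(phone):
            issues.append((index, "bad_phone_format"))
        if phone and phone_counts[phone] > 1:
            issues.append((index, "duplicate_phone"))
    return issues


sample = [
    {"name": "A. Rivera", "phone": "+14155550101", "email": "a@example.com"},
    {"name": "B. Chen", "phone": "415-555-0102", "email": ""},
    {"name": "A Rivera", "phone": "+14155550101", "email": "ar@example.com"},
]
for issue in audit_records(sample):
    print(issue)
```

Running an audit like this against an export before integration gives a concrete issue list to clear, rather than discovering the same problems through live-call escalations.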

Testing Only with the People Who Built the System

An agent that performs well for a team of engineers in a quiet office often underperforms significantly for callers with regional accents, faster speech rates, or background noise. Pre-launch testing must include genuinely diverse speech patterns and acoustic conditions. The testing environment should resemble the production environment, not the development one.
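One common way to quantify how transcription quality differs across accents and acoustic conditions is word error rate (WER): the word-level edit distance between a human reference transcript and the ASR output, divided by the number of reference words. The sketch below is a plain-Python implementation for illustration. It is not tied to any ASR vendor, and the sample transcripts are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference word count."""
    ref_words = reference.lower().split()
    hyp_words = hypothesis.lower().split()
    if not ref_words:
        raise ValueError("reference transcript must not be empty")

    # previous[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    previous = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            current.append(min(
                previous[j] + 1,                      # deletion
                current[j - 1] + 1,                   # insertion
                previous[j - 1] + substitution_cost,  # match or substitution
            ))
        previous = current
    return previous[-1] / len(ref_words)


# Made-up example: the same utterance as heard in two test conditions.
reference = "book a table for two"
print(word_error_rate(reference, "book a table for two"))
print(word_error_rate(reference, "book table for you"))
```

Computing WER separately for each test group (quiet office, kitchen noise, specific regional accents) makes the gap between development and production conditions visible before launch.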

For context on how leading organizations are building this infrastructure across verticals, this analysis of agentic AI companies driving digital transformation maps the patterns clearly.

Evaluating AI Voice Agents for Your Business?

Book a free 30-minute strategy session with our specialists to discuss your goals, infrastructure, and deployment approach.

Why Businesses Choose Signity Solutions to Build Their Voice Agent

Most businesses that struggle with AI voice agent deployments do not have a technology problem. They have a partner problem. Vendors hand over a configured platform and call it a deployment.

What your business actually needs is someone who understands the dialogue architecture, the integration requirements, the vertical-specific failure points, and the optimization work that turns a working agent into a high-performing one.

That is where Signity Solutions operates. With over 80 AI voice agents built and deployed across industries, we bring production experience that most AI agent development companies cannot offer.

Here’s what that looks like across different business types:

1. For SMBs: Fast-track deployments built around the top inbound call types, with pre-integrated CRM connectors and escalation paths tested before go-live. We build SMB deployments that go operational in weeks, not quarters, with pricing tied to actual call volume rather than enterprise licensing tiers.

2. For mid-market businesses: Custom dialogue flows mapped to specific product lines, service tiers, or regional variations. Multi-system integrations connecting the voice agent to the ticketing platform, CRM, and scheduling tool so resolution happens in one call.

3. For enterprise teams: End-to-end deployment ownership covering ASR and NLU configuration, back-end integration, compliance architecture, and structured post-launch optimization cycles. We deliver enterprise agents that consistently maintain first-call resolution rates that outperform legacy contact center benchmarks.

Across every deployment, the pattern is the same: lower cost per resolved interaction and higher CSAT for AI-handled calls.

Whether the challenge is a single-location clinic losing patients to voicemail after hours, a restaurant group scaling phone order capacity without adding headcount, or an enterprise contact center working to move deflection rates by 30% before the next fiscal review, the architecture is different for each of them.

Frequently Asked Questions

Have a question in mind? We are here to answer. If you don’t see your question here, drop us a line at our contact page.

How do AI voice agents learn over time?

Every deployment includes post-launch optimization cycles where real call transcripts drive retraining. Agents improve continuously, not just at launch.

Can AI voice agents support multiple languages?

Yes. Signity builds multilingual voice agents with language detection built into the NLU layer. Deployment scope and accuracy targets are defined during scoping, not after.

How long does a voice agent deployment take?

Our custom deployments typically go live in four to six weeks. Timeline depends on integration complexity and the number of call flows being automated from day one.

Do AI voice agents replace human support teams?

Do AI voice agents detect caller emotions in real time?
