Flow Engineering: Redefining AI Problem Solving in Software Development

Flow engineering structures AI reasoning through multi-step pipelines rather than single prompts. It uses task decomposition, verification loops, and agent-based architecture to help enterprise teams ship reliable, production-ready AI-generated code at scale.


Every enterprise engineering team now has access to the same AI models. Same capabilities, same context windows, same code generation quality on paper. And yet the gap between teams shipping reliable AI-assisted software solutions and those stuck in endless revision cycles keeps widening.

Gartner predicts that by 2028, 33% of enterprise software applications will include agentic AI. The teams getting ahead of that curve have already stopped treating AI as a single-turn tool.

The models are not the variable. The workflow is. That is the core argument behind flow engineering, and it is reshaping how serious engineering teams approach AI software development from the ground up.

When you hand a complex software problem to an AI in a single prompt, you are using a reasoning engine like a calculator. One input, one output, nothing in between. The gaps surface later: edge cases fail, integrations break, and code that held up in isolation collapses under real conditions.

Flow engineering restructures that interaction. It gives the AI a designed process: plan first, decompose the problem, execute in stages, and verify at each step. The result is not just faster output but output built to hold up.

Key Takeaways

- The model is not the problem. Your AI workflow is.

- Prompt engineering breaks the moment software complexity scales beyond a single task.

- Task decomposition is the biggest lever for improving AI-generated code quality.

- Verification loops catch errors at the source before they compound downstream.

- Enterprise teams winning with AI compete on flow quality, not model access.

What Is Flow Engineering and How Is It Different from Prompt Engineering?

Prompt engineering is a skill. Flow engineering is a system.

A strong prompt extracts a better single output from a model. But it operates inside tight constraints: one question, one answer, no persistent state, and no verification of what comes out. For complex AI software development, that fails consistently, because accuracy, context, and logic need to hold across many turns, not just one.

Flow engineering changes the architecture. Instead of a single prompt, there is a pipeline. A sequence of AI model calls runs through it, each with a defined role, connected by logic that governs what passes forward, what triggers a retry, and what requires human review before proceeding.
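A minimal Python sketch of that kind of pipeline follows. The `Step` and `Outcome` names and the stubbed `plan` and `generate` functions are illustrative assumptions, not part of any particular framework. In a real flow, each `run` callable would wrap a model call.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Outcome(Enum):
    PASS = "pass"      # hand the result to the next step
    RETRY = "retry"    # run the same step again
    REVIEW = "review"  # stop and wait for a human


@dataclass
class Step:
    name: str
    run: Callable[[str], tuple[Outcome, str]]  # stands in for one model call
    max_retries: int = 2


def run_flow(steps: list[Step], task: str) -> tuple[str, str]:
    """Run steps in order and return (status, last_payload)."""
    payload = task
    for step in steps:
        attempts = 0
        while True:
            outcome, result = step.run(payload)
            if outcome is Outcome.PASS:
                payload = result  # only passing output moves forward
                break
            if outcome is Outcome.REVIEW:
                return f"needs_review:{step.name}", result
            attempts += 1
            if attempts > step.max_retries:
                return f"failed:{step.name}", result
    return "done", payload


# Hypothetical stubs: the planner passes at once, the generator needs one retry.
def plan(text: str) -> tuple[Outcome, str]:
    return Outcome.PASS, f"plan({text})"


_generate_calls = {"count": 0}


def generate(text: str) -> tuple[Outcome, str]:
    _generate_calls["count"] += 1
    if _generate_calls["count"] == 1:
        return Outcome.RETRY, text
    return Outcome.PASS, f"code({text})"


status, output = run_flow(
    [Step("plan", plan), Step("generate", generate)],
    "add webhook handler",
)
```

The routing logic lives outside the steps, so a retry limit or a human checkpoint can change without touching any prompt.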

The shift is already visible in production tools. Cognition AI introduced Devin as an AI software engineer that completes multi-step engineering tasks autonomously by planning, searching, writing, testing, and fixing across a structured sequence. GitHub's Copilot Workspace moved in the same direction, replacing one-shot generation with a multi-step reasoning environment.

For teams evaluating partners for AI development or software development services, the question is whether those partners are building single-turn integrations or designing flows. The answer determines whether you get speed alone or speed with reliability.

What Is Task Decomposition in AI Flow Engineering and Why Does It Matter?

Large language models are not bad at complex problems. They are bad at large, unstructured problems handed over all at once.

Task decomposition breaks complex software work into smaller, model-manageable subtasks. When a model receives well-defined pieces, each one can be specified and checked on its own, so accuracy improves. Context stays clean, and output holds together across the full sequence.

Consider a payments integration covering authentication, transaction processing, webhook handling, error states, and reconciliation logic. A single prompt returns something that looks complete but breaks under review. Decomposing it changes the outcome entirely.

- Validate the data schema before writing logic

- Verify authentication before building transaction flows

- Handle error states as a dedicated step

- Test each module before the next begins
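One way to sketch that decomposition in Python is a dependency map that fixes the build order and stops at the first module that fails its tests. The subtask names and the `build_and_test` callback are hypothetical. The standard-library `graphlib` module handles the ordering.

```python
from graphlib import TopologicalSorter
from typing import Callable, Optional

# Each subtask maps to the subtasks that must pass before it starts.
# Names are illustrative, taken from the payments example above.
SUBTASKS: dict[str, set[str]] = {
    "validate_schema": set(),
    "authentication": {"validate_schema"},
    "transaction_flow": {"authentication"},
    "webhook_handling": {"transaction_flow"},
    "error_states": {"webhook_handling"},
    "reconciliation": {"error_states"},
}


def execution_order(subtasks: dict[str, set[str]]) -> list[str]:
    """Return an order in which every subtask follows its prerequisites."""
    return list(TopologicalSorter(subtasks).static_order())


def run_decomposed(
    subtasks: dict[str, set[str]],
    build_and_test: Callable[[str, tuple[str, ...]], bool],
) -> tuple[list[str], Optional[str]]:
    """Build each module in order; stop at the first one that fails its tests."""
    completed: list[str] = []
    for name in execution_order(subtasks):
        # The callback sees only the modules that have already passed.
        if not build_and_test(name, tuple(completed)):
            return completed, name
        completed.append(name)
    return completed, None
```

Because a failing module halts the run, later subtasks never build on output that has not been tested.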

Andrew Ng has written directly on this: iterative refinement across a structured sequence can outperform a single well-optimized prompt, and not by a small margin.

For teams investing in generative AI development, this is often the deciding variable between AI projects that stall before meeting key performance indicators and those that reach production.

Related Read: Strategies to Integrate Generative AI in Business Workflow Effectively

How Flow Engineering Improves AI-Generated Code Quality and Reliability

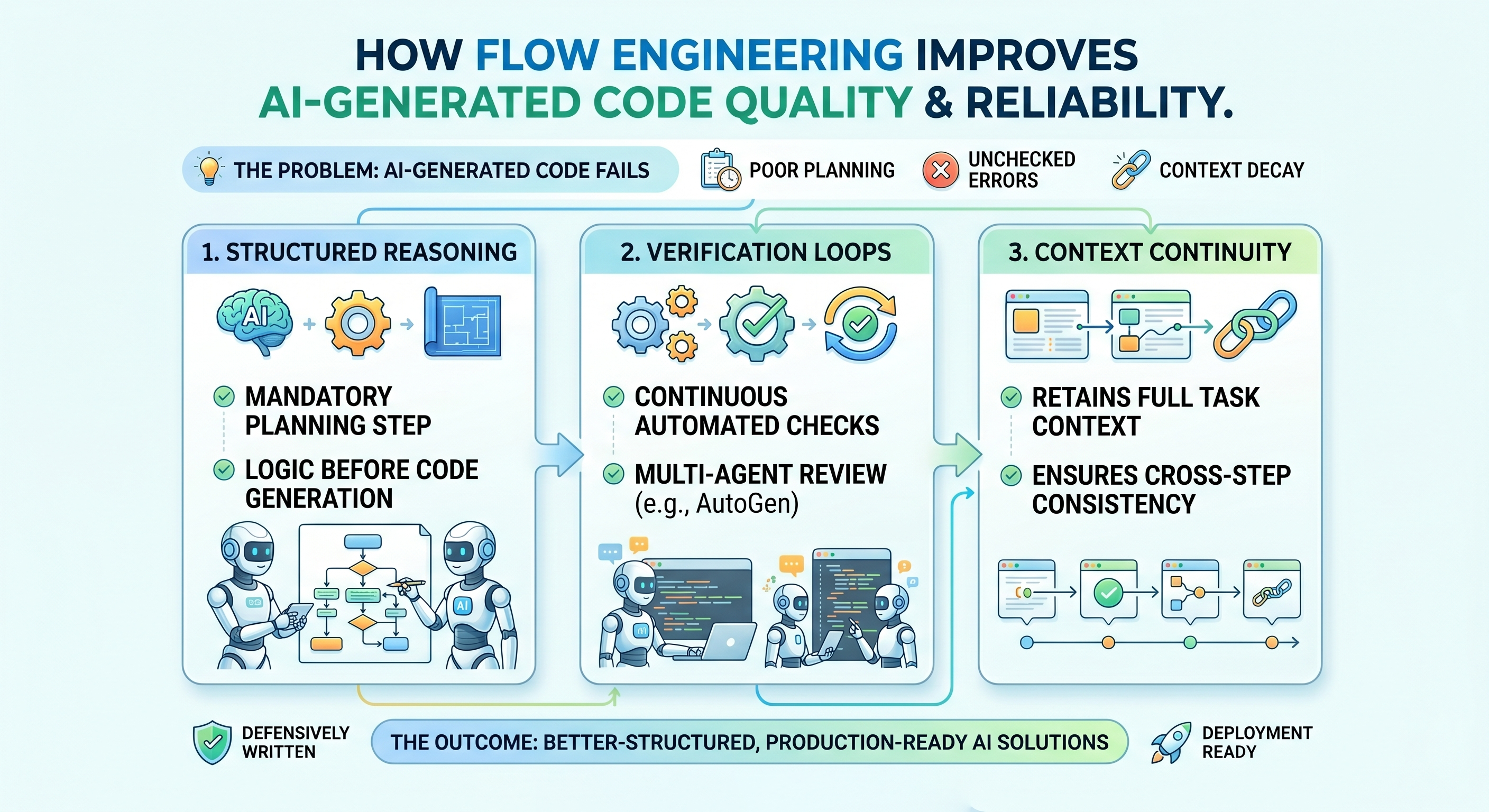

AI-generated code fails in predictable patterns. Advanced models skip planning, errors propagate unchecked, and context degrades across long tasks. A well-structured generative AI development process addresses all three.

Structured reasoning forces the AI model to plan before executing. The flow requires a defined approach, flagged ambiguities, and logic structure before any code is generated. This removes the wide category of errors that come from jumping straight to implementation.
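A planning gate of this kind can be sketched in a few lines of Python. The `Plan` fields and the `gate` function are assumptions made for illustration; the idea is simply that code generation does not start until the plan is complete.

```python
from dataclasses import dataclass, field


@dataclass
class Plan:
    approach: str
    steps: list[str] = field(default_factory=list)        # the logic structure
    ambiguities: list[str] = field(default_factory=list)  # open questions the model flagged


def gate(plan: Plan) -> list[str]:
    """Return the reasons code generation must wait; an empty list means proceed."""
    problems: list[str] = []
    if not plan.approach.strip():
        problems.append("no approach defined")
    if not plan.steps:
        problems.append("no logic structure defined")
    problems += [f"unresolved ambiguity: {question}" for question in plan.ambiguities]
    return problems


draft = Plan(
    approach="Idempotent webhook handler keyed on event id",
    steps=["verify signature", "deduplicate event", "update ledger"],
    ambiguities=["Which events should trigger a refund?"],
)
blocking = gate(draft)
```

Here `blocking` holds one entry, so the flow would send the open question back for an answer instead of generating code against a guess.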

Verification loops catch errors mid-flow. These automated checkpoints include a test runner validating code against predefined cases, a linter catching structural issues, and a second model call reviewing output against the original specification. Microsoft's AutoGen framework is built on this principle. Multiple AI agents check each other's work before output advances, with minimal human intervention required.
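The loop below is a rough Python sketch of that pattern, not AutoGen's API. The `syntax_check` function stands in for a linter and `run_cases` for a test runner. The `fake_model` generator is a stub that replays three canned drafts. A second-model review could be added as one more check with the same signature.

```python
import ast
from typing import Callable, Optional

Check = Callable[[str], Optional[str]]  # returns an error message, or None on success


def syntax_check(code: str) -> Optional[str]:
    """Stand-in for a linter: reject code that does not parse."""
    try:
        ast.parse(code)
    except SyntaxError as exc:
        return f"syntax error: {exc.msg}"
    return None


def run_cases(code: str) -> Optional[str]:
    """Stand-in for a test runner: check add() against predefined cases."""
    namespace: dict = {}
    exec(code, namespace)  # sketch only; real systems should sandbox generated code
    add = namespace.get("add")
    if add is None:
        return "add() is not defined"
    for args, expected in [((1, 2), 3), ((-1, 1), 0)]:
        actual = add(*args)
        if actual != expected:
            return f"add{args} returned {actual}, expected {expected}"
    return None


def first_failure(code: str, checks: list[Check]) -> Optional[str]:
    for check in checks:
        error = check(code)
        if error is not None:
            return error
    return None


def generate_with_verification(generate, checks: list[Check], max_attempts: int = 3):
    """Regenerate until every check passes, feeding each failure back to the generator."""
    feedback = None
    for attempt in range(1, max_attempts + 1):
        code = generate(feedback)
        feedback = first_failure(code, checks)
        if feedback is None:
            return code, attempt
    raise RuntimeError(f"verification failed: {feedback}")


# Hypothetical model stub that improves across three drafts.
_drafts = iter([
    "def add(a, b) return a + b",        # does not parse
    "def add(a, b):\n    return a - b",  # parses, fails a test case
    "def add(a, b):\n    return a + b",  # passes every check
])
seen_feedback: list = []


def fake_model(feedback):
    seen_feedback.append(feedback)
    return next(_drafts)


code, attempts = generate_with_verification(fake_model, [syntax_check, run_cases])
```

Each failure message becomes the input to the next generation attempt, which is what keeps an early error from propagating to later steps.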

Context continuity ensures the model maintains a coherent view of the full task at every step, preventing the common failure in which step 4 contradicts step 1 because earlier context was diluted.

AI solutions built through this process are better structured, more defensively written, and consistently closer to production-ready on the first pass.

Struggling to move your AI projects from POC to production?

We build flow-engineered AI systems designed to scale beyond the proof-of-concept stage.

Building Blocks of a Flow Engineering System

A flow engineering system is a composed architecture built by data scientists and AI engineers, with four components working together: orchestrators, agents, memory layers, and tool integrations.

Orchestrators determine which step runs next, what passes forward, and when a human checkpoint should trigger. LangGraph is one of the most widely adopted frameworks for this today.

Agents execute specific subtasks within the flow. Each operates within a defined scope.

- One handles requirement parsing using natural language processing

- One handles code generation

- One handles test construction

- Others can be configured for process automation, predictive maintenance, or machine learning model evaluation
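The agent roles listed above can be sketched as small objects that each declare what they accept and what they produce. The `Agent` class, the artifact kinds, and the lambda handlers are hypothetical stand-ins for model-backed agents.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Agent:
    name: str
    accepts: str                   # kind of artifact this agent may read
    produces: str                  # kind of artifact this agent emits
    handler: Callable[[str], str]  # stands in for a model call

    def run(self, kind: str, content: str) -> tuple[str, str]:
        # Enforce the agent's scope: refuse input it was not designed for.
        if kind != self.accepts:
            raise ValueError(f"{self.name} only accepts {self.accepts!r}, got {kind!r}")
        return self.produces, self.handler(content)


AGENTS = [
    Agent("requirement_parser", "request", "requirements", lambda t: f"requirements for: {t}"),
    Agent("code_generator", "requirements", "code", lambda t: f"code from [{t}]"),
    Agent("test_builder", "code", "tests", lambda t: f"tests for [{t}]"),
]


def run_chain(agents: list[Agent], kind: str, content: str) -> tuple[str, str]:
    """Pass one artifact through the agents in order."""
    for agent in agents:
        kind, content = agent.run(kind, content)
    return kind, content


final_kind, final_content = run_chain(AGENTS, "request", "refund endpoint")
```

Declaring the scope on the agent means a miswired flow fails immediately with a clear error, not silently with off-target output.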

Memory layers maintain state across the flow, from short-term context for the current step to long-term references covering architecture constraints and enterprise data across structured and unstructured data sources.
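As a rough illustration, the two layers can be modeled as a persistent dictionary of constraints and a bounded buffer of recent step notes. The `FlowMemory` class and its method names are assumptions for this sketch; production systems typically back the long-term layer with a database or vector store.

```python
from collections import deque


class FlowMemory:
    def __init__(self, constraints: dict[str, str], short_term_size: int = 3):
        # Long-term layer: architecture constraints that hold for the whole flow.
        self.long_term = dict(constraints)
        # Short-term layer: only the most recent notes; older ones fall off.
        self.short_term: deque[str] = deque(maxlen=short_term_size)

    def note(self, text: str) -> None:
        self.short_term.append(text)

    def context(self) -> str:
        """Render the text a step would receive alongside its instruction."""
        constraints = [f"{key}: {value}" for key, value in self.long_term.items()]
        return "\n".join(
            ["Constraints:", *constraints, "Recent steps:", *self.short_term]
        )


memory = FlowMemory({"currency": "amounts are integer cents"}, short_term_size=2)
memory.note("schema validated")
memory.note("auth module passed tests")
memory.note("transaction flow drafted")
rendered = memory.context()
```

After three notes, the oldest one has dropped out of `rendered`, but the currency constraint is still present, so a late step cannot contradict a decision made at the start.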

Tool integrations extend agent capability beyond text generation. AI model training and fine-tuning of agents for specific roles sharpens output without rebuilding the pipeline. Generative AI tools, when integrated correctly, are what separate a functional flow from a fragile one.

Why Flow Engineering Is Becoming the Default in Enterprise AI Development

Enterprise teams are rebuilding artificial intelligence workflows for one consistent reason. Single-turn AI delivered speed. It did not deliver reliability. Teams that invest in structured generative AI services consistently outperform those running ad-hoc prompt workflows at scale.

Production code has to hold under load, achieve seamless AI integration with existing systems, pass data security review, and behave predictably across edge cases. Flow-based workflows are built for exactly those requirements and align with responsible AI principles from the start.

The leading AI development companies are not competing based on model access. The differentiation is in flow quality.

- How precisely business processes are mapped at intake

- How verification loops are structured across stages

- How quickly AI solutions reach the threshold where engineers ship rather than rewrite

For technology leaders looking to scale artificial intelligence initiatives, drive process automation, and make data-driven decisions, the pattern is consistent: teams that build with flow engineering reduce operational costs and achieve sustainable business growth.

Not sure where your AI development workflow is breaking down?

We help identify gaps and build production-ready AI systems from strategy to deployment.

Conclusion

The question is no longer whether artificial intelligence belongs in software development. The question is how to make it reliable enough to matter at scale.

Flow engineering answers that with structure. Decomposed tasks, defined AI agents, verification at each stage, context managed throughout. What comes out looks less like an experiment and more like a custom AI solution aligned to real business goals.

For mid-market cross-functional teams moving from experimentation to structured deployment, Signity's generative AI development practice delivers custom generative AI solutions built on this architecture. Flow-engineered, outcome-driven, and designed to hold up well past the POC.

Frequently Asked Questions

Have a question in mind? We are here to answer. If you don’t see your question here, drop us a line at our contact page.

How do AI development services streamline enterprise business operations?

Expert AI development services apply machine learning algorithms and generative AI tools to automate business processes, reduce operational expenses, improve model accuracy, and deliver measurable ROI aligned to business objectives.

How does flow engineering integrate with existing software development workflows?

Flow engineering layers structured AI pipelines over existing workflows without disruption. Teams gain seamless AI integration, stronger data security, faster AI adoption, and more reliable software solutions across development cycles.

What role do large language models play in flow engineering?

Large language models power each agent node within the flow. Combined with fine-tuning and AI model training, they enable context-aware, accurate, and production-ready generative AI development across complex software tasks.

How does flow engineering support responsible AI at enterprise scale?

Flow engineering embeds verification loops, human checkpoints, and audit trails into every pipeline stage, enabling enterprises to scale AI initiatives securely while maintaining data security, responsible AI standards, and business goal alignment.

How do custom generative AI solutions improve enterprise software development?

Custom generative AI solutions enable enterprises to automate complex workflows, accelerate deep learning model performance, speed up AI implementation, and build capabilities aligned to specific business goals and key performance indicators.

What should businesses look for in an AI development partner?

A strong AI development partner brings AI consulting expertise, generative AI product development capabilities, and data science depth to design, deploy, and scale innovative solutions that integrate with existing systems seamlessly.
